I just Googled “Big Data” and got 19,600,000 results… Where there was virtually nothing two years ago, there is now unprecedented hype. While the most serious sources are IBM, McKinsey, and O’Reilly, most articles are marketing rants, uninformed opinions or just plain wrong. I had to ask myself… what does it mean for the digital analyst?

Below is a figure from Google Trends showing the growth of search interest for “big data” as compared to “web analytics” and “business intelligence.”

Big Data – Hype

It’s not surprising that Gartner positions “Big Data” between “social TV” and “mobile robots”, midway toward the peak of inflated expectations – two to five years before reaching a more mature stage. The number of products boasting the “big data” mantra is exploding, and mass media are entering the fray, as exemplified by the New York Times article “The Age of Big Data” and a Forbes series entitled “Big Data Technology Evaluation Checklist”.

On the brighter side, concepts of Big Data are spurring cultural shifts within organizations, challenging outdated “business intelligence” approaches and raising awareness of “analytics” in general.

Innovative technologies being built for Big Data can readily apply to environments such as digital analytics. It is worth noting that interest in traditional web analytics seems to be diminishing as organizations mature toward broader, more complex and more valuable forms of advanced business analysis.

Big Data – Definition

There is no universal definition of what constitutes “Big Data” and Wikipedia offers only a very weak and incomplete one: “Big data is a term applied to data sets whose size is beyond the ability of commonly used software tools to capture, manage, and process the data within a tolerable elapsed time”.

IBM offers a good, simple overview:

Big data spans three dimensions: Volume, Velocity and Variety.

- Volume – Big data comes in one size: large. Enterprises are awash with data, easily amassing terabytes and even petabytes of information.

- Velocity – Often time-sensitive, big data must be used as it is streaming in to the enterprise in order to maximize its value to the business.

- Variety – Big data extends beyond structured data, including unstructured data of all varieties: text, audio, video, click streams, log files and more.

Bryan Smith of MSDN adds a fourth V:

- Variability – Defined as the differing ways in which the data may be interpreted. Differing questions require differing interpretations.

Big Data – Technological Perspective

Big Data encompasses several aspects also commonly found in business intelligence: data capture, storage, search, sharing, analytics and visualization. In his book entitled “Big Data Glossary”, Pete Warden covers sixty innovations and provides a brief overview of technological concepts relevant to Big Data.

- Acquisition: Refers to the various data sources, internal or external, structured or not. “Most of the interesting public data sources are poorly structured, full of noise, and hard to access.”

Technologies: Google Refine, Needlebase, ScraperWiki, BloomReach.

- Serialization: “As you work on turning your data into something useful, it will have to pass between various systems and probably be stored in files at various points. These operations all require some kind of serialization, especially since different stages of your processing are likely to require different languages and APIs. When you’re dealing with very large numbers of records, the choices you make about how to represent and store them can have a massive impact on your storage requirements and performance.”

Technologies: JSON, BSON, Thrift, Avro, Google Protocol Buffers.

- Storage: “Large-scale data processing operations access data in a way that traditional file systems are not designed for. Data tends to be written and read in large batches, multiple megabytes at once. Efficiency is a higher priority than features like directories that help organize information in a user-friendly way. The massive size of the data also means that it needs to be stored across multiple machines in a distributed way.”

Technologies: Amazon S3, Hadoop Distributed File System.

- Servers: “‘The cloud’ is a very vague term, but there’s been a real change in the availability of computing resources. Rather than the purchase or long-term leasing of a physical machine that used to be the norm, now it’s much more common to rent computers that are being run as virtual instances. This makes it economical for the provider to offer very short-term rentals of flexible numbers of machines, which is ideal for a lot of data processing applications. Being able to quickly fire up a large cluster makes it possible to deal with very big data problems on a small budget.”

Technologies: Amazon EC2, Google App Engine, Amazon Elastic Beanstalk, Heroku.

- NoSQL: In computing, NoSQL (which really means “not only SQL”) is a broad class of database management systems that differ from the classic model of the relational database management system (RDBMS) in some significant ways, the most important being that they do not use SQL as their primary query language. These data stores may not require fixed table schemas, usually do not support join operations, may not give full ACID (atomicity, consistency, isolation, durability) guarantees, and typically scale horizontally (i.e. by adding new servers and spreading the workload rather than upgrading existing servers).

Technologies: Apache Hadoop, Apache Cassandra, MongoDB, Apache CouchDB, Redis, BigTable, HBase, Hypertable, Voldemort. See http://nosql-database.org/ for a complete list.

- MapReduce: “In the traditional relational database world, all processing happens after the information has been loaded into the store, using a specialized query language on highly structured and optimized data structures. The approach pioneered by Google, and adopted by many other web companies, is to instead create a pipeline that reads and writes to arbitrary file formats, with intermediate results being passed between stages as files, with the computation spread across many machines.”

Technologies: Hadoop & Hive, Pig, Cascading, Cascalog, mrjob, Caffeine, S4, MapR, Acunu, Flume, Kafka, Azkaban, Oozie, Greenplum.

- Processing: “Getting the concise, valuable information you want from a sea of data can be challenging, but there’s been a lot of progress around systems that help you turn your datasets into something that makes sense. Because there are so many different barriers, the tools range from rapid statistical analysis systems to enlisting human helpers.”

Technologies: R, Yahoo! Pipes, Mechanical Turk, Solr/Lucene, ElasticSearch, Datameer, Bigsheets, Tinkerpop.

Startups: Continuuity, Wibidata, Platfora.

- Natural Language Processing: “Natural language processing (NLP) … focus is taking messy, human-created text and extracting meaningful information.”

Technologies: Natural Language Toolkit, Apache OpenNLP, Boilerpipe, OpenCalais.

- Machine Learning: “Machine learning systems automate decision making on data. They use training information to deal with subsequent data points, automatically producing outputs like recommendations or groupings. These systems are especially useful when you want to turn the results of a one-off data analysis into a production service that will perform something similar on new data without supervision. Some of the most famous uses of these techniques are features like Amazon’s product recommendations.”

Technologies: WEKA, Mahout, scikits.learn, SkyTree.

- Visualization: “One of the best ways to communicate the meaning of data is by extracting the important parts and presenting them graphically. This is helpful both for internal use, as an exploration technique to spot patterns that aren’t obvious from the raw values, and as a way to succinctly present end users with understandable results. As the Web has turned graphs from static images to interactive objects, the lines between presentation and exploration have blurred.”

Technologies: GraphViz, Processing, Protovis, Google Fusion Tables, Tableau Software.
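To make the MapReduce idea above a little more concrete, here is a minimal, single-machine sketch in plain Python. It is not how Hadoop or Google implement it; it only illustrates the three stages (map, shuffle, reduce) on a handful of made-up click-stream lines. The record layout, the `LOG_LINES` data and all function names are assumptions chosen for illustration.

```python
from collections import defaultdict
from itertools import chain

# Hypothetical click-stream records: "visitor_id<TAB>page"
LOG_LINES = [
    "v1\t/home",
    "v2\t/home",
    "v1\t/pricing",
    "v3\t/home",
    "v2\t/pricing",
    "v1\t/home",
]


def map_phase(line):
    """Emit one (page, 1) pair per log line."""
    _visitor, page = line.split("\t")
    yield page, 1


def shuffle_phase(pairs):
    """Group values by key, as a framework would do between stages."""
    grouped = defaultdict(list)
    for key, value in pairs:
        grouped[key].append(value)
    return grouped


def reduce_phase(key, values):
    """Collapse all values for one key into a single result."""
    return key, sum(values)


def run_job(lines):
    """Chain the three stages; a real cluster would spread them across machines."""
    mapped = chain.from_iterable(map_phase(line) for line in lines)
    grouped = shuffle_phase(mapped)
    return dict(reduce_phase(key, values) for key, values in grouped.items())


page_views = run_job(LOG_LINES)
print(page_views)  # {'/home': 4, '/pricing': 2}
```

In a real deployment each stage would read and write files, and the map and reduce functions would run in parallel on many machines; the logic an analyst writes, however, stays about this small.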

Big Data – Challenges

Big Data was discussed at the recent World Economic Forum, which identified several opportunities for applying Big Data, but also two main concerns and obstacles on the path to a data commons.

1. Privacy and Security

As Craig & Ludloff put it in “Privacy and Big Data”, the conditions for a perfect storm are being set. Big Data touches many aspects of privacy: the right to privacy, Big Brother, international regulations, privacy vs. security vs. commodity, the impact on marketing and advertising…

Just think about EU cookie regulations, or more simply, a startup scavenging the social web to build very complete profiles of people – with email, name, location, interests and such. Scary! (I will share this little story in an upcoming post).

2. Human Capital

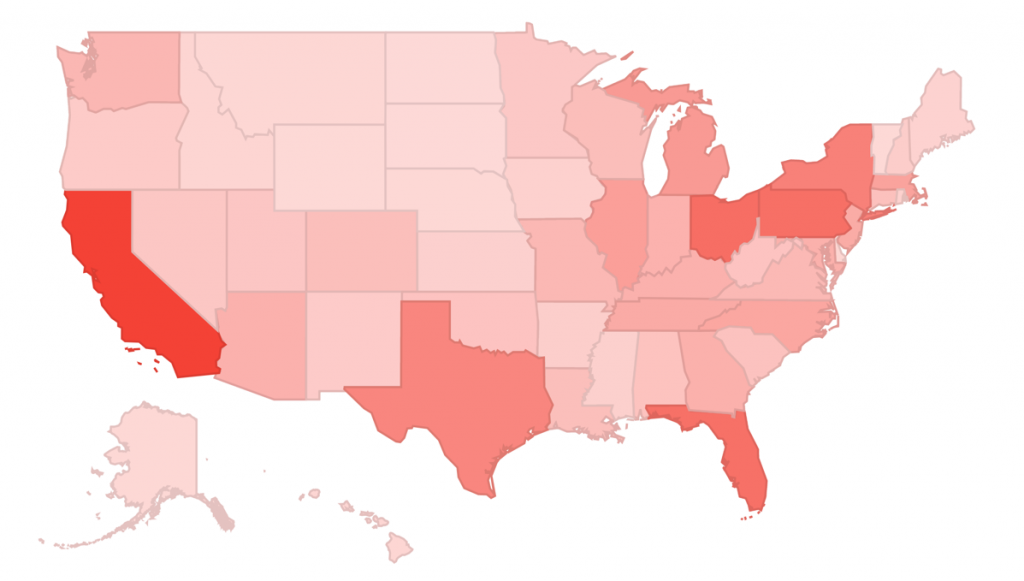

McKinsey Global Institute projects that the US will need 140,000 to 190,000 more workers with “deep analytics” expertise and 1.5M more data-literate managers.

Finding skilled “web analytics” resources is already a challenge. Considering how steep the climb is to reach serious analytics skills, human capital is certainly the other big challenge.

Big Data – Value Creation

All sources mention value creation, competitive advantage and productivity gains. There are five broad ways in which using big data can create value.

- Transparency: Making data accessible to relevant stakeholders in a timely manner.

- Experimentation: Enabling experimentation to discover needs, expose variability, and improve performance. As more transactional data is stored in digital form, organizations can collect more accurate and detailed performance data.

- Segmentation: More granular segmentation of populations can lead to customized actions.

- Decision Support: Replacing or supporting human decision making with automated algorithms that can improve decisions, minimize risks, and uncover valuable insights that would otherwise remain hidden.

- Innovation: Big Data enables companies to create new products and services, enhance existing ones, and invent or refine business models.

Industry sectors growth: each of those important outcomes can become reality only if sufficient and properly trained human capital is available.
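As a small illustration of the Segmentation point above, the sketch below computes a conversion rate per segment from a few made-up visit records. The `VISITS` data, the choice of (traffic source, device) as the segment key, and the function name are all assumptions for the example, not something taken from the sources cited here.

```python
from collections import defaultdict

# Hypothetical visit records: (traffic_source, device, converted)
VISITS = [
    ("search", "mobile", False),
    ("search", "desktop", True),
    ("email", "desktop", True),
    ("email", "mobile", False),
    ("search", "desktop", False),
    ("email", "desktop", True),
    ("search", "mobile", False),
    ("email", "mobile", True),
]


def conversion_by_segment(visits):
    """Return {(source, device): conversion_rate} for every segment seen."""
    totals = defaultdict(lambda: [0, 0])  # segment -> [visits, conversions]
    for source, device, converted in visits:
        segment = (source, device)
        totals[segment][0] += 1
        totals[segment][1] += int(converted)
    return {seg: conv / count for seg, (count, conv) in totals.items()}


overall = sum(converted for _, _, converted in VISITS) / len(VISITS)
rates = conversion_by_segment(VISITS)

print(f"overall: {overall:.0%}")
for segment, rate in sorted(rates.items()):
    print(segment, f"{rate:.0%}")
```

With this toy data the overall rate is 50%, yet the segments range from 0% (search on mobile) to 100% (email on desktop). That spread is exactly what a single aggregate number hides and what more granular segmentation exposes.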

Areas Of Opportunities For Digital Analysts

With the evolution from “web analytics” to “digital intelligence”, there is no doubt digital analysts should gradually shift from website-centricity and channel-specific tactics – expert as we are – to more strategic, business-oriented (Big) Data expertise.

The primary focus of digital analysts should not be on the lower layers of infrastructure and tools development. The following points are strong areas of opportunity:

- Processing: Mastering the proper tools for efficient analysis under different conditions (different data sets, varied business environments, etc.). Although we web analysts are undoubtedly experts at leveraging web analytics tools, most of us lack broader expertise in business intelligence and statistical analysis tools such as Tableau, SAS and Cognos.

- NLP: Developing expertise in unstructured data analysis such as social media, call center logs and emails. As with Processing, the goal should be to identify and master some of the most appropriate tools in this space, be it social media sentiment analysis or more sophisticated platforms.

- Visualization: There is a clear opportunity for digital analysts to develop expertise in dashboarding and, more broadly, data visualization techniques (not to be confused with the marketing frenzy of “infographics”).
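To give a feel for what the simplest form of social media sentiment analysis looks like, here is a deliberately naive, lexicon-based sketch. The word lists, sample comments and function names are invented for illustration; real tools rely on much larger vocabularies or trained models and handle negation, sarcasm and context, which this does not.

```python
import re

# Tiny hypothetical lexicon, for illustration only.
POSITIVE = {"love", "great", "fast", "helpful"}
NEGATIVE = {"slow", "broken", "hate", "confusing"}


def sentiment_score(text):
    """Count positive words minus negative words in the text."""
    words = re.findall(r"[a-z']+", text.lower())
    return sum(w in POSITIVE for w in words) - sum(w in NEGATIVE for w in words)


def label(text):
    """Map the raw score to a coarse label."""
    score = sentiment_score(text)
    if score > 0:
        return "positive"
    if score < 0:
        return "negative"
    return "neutral"


# Hypothetical customer comments.
COMMENTS = [
    "Love the new checkout, so fast!",
    "The search page is slow and confusing.",
    "Ordered a blue one yesterday.",
]

labels = [label(comment) for comment in COMMENTS]
for comment, result in zip(COMMENTS, labels):
    print(f"{result:>8}: {comment}")
```

The value for an analyst lies less in writing such code than in knowing what the tool is doing under the hood, and therefore where its results should not be trusted.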

Action plan

One of the greatest challenges will be to balance supply and demand for skilled resources. The current base of “web analysts” is generally not sophisticated enough to really leverage Big Data; filling the skills gap will necessarily involve growing “web analysts” into “digital analysts”.

This is where you can help:

- Identify thought leaders;

- Identify skills gaps;

- Identify learning opportunities and curriculum;

- Share your thoughts, tools of the trade and tips with the community (through the Twitter #measure hashtag and @SHamelCP or reach me on Google+ as Stéphane Hamel).