In June 2013, Google announced a new feature that enables the export of unsampled data from Views (Profiles) directly into BigQuery. Unlimited in terms of rows, and with additional fields such as visit identifiers and timestamps, it’s the rawest form of Google Analytics data that has ever been available to digital managers and developers.

This opens up an opportunity for granular interactive analyses that can really take a business’ Analytics capabilities to the next level. For a quick overview and a business case of BigQuery Export, check out the launch video from Google I/O.

By connecting two independently successful Google products, developers and analysts are now able to leverage the awesome interactive query system that is BigQuery (originating from Dremel, Google’s internal querying system) to analyse their unsampled Google Analytics data.

In this post, I will walk you through how this feature is put together, what it looks like, and some important things to be aware of when considering BigQuery Export as part of your Analytics solution.

Eager to see a real life example right away? Check out the video below where I combine Google Analytics data with internal CRM data.

How Does It Work?

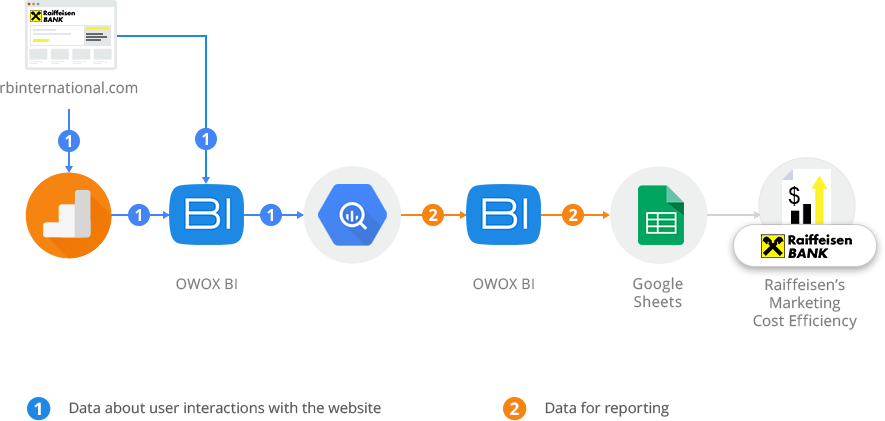

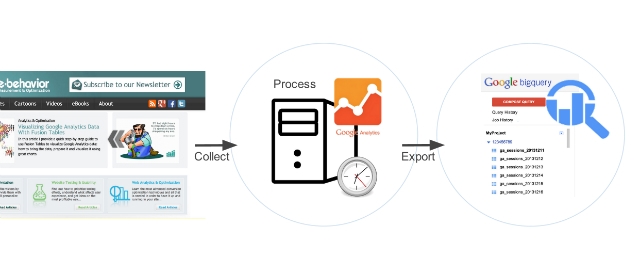

Let’s start by hashing out how this feature actually works. As data is collected from your website or application, in the shape of http requests, it flows through the Google Analytics servers and undergoes various stages of processing. Sessionization, such as the grouping of hits together into separate visits, and the application of the Filters you have set up, are examples of configurations that take place during these stages. Once the data is processed, it is made available in tables which are queried through, for example, the Google Analytics user interface. These are the basics of how the Analytics reports you are used to are populated with data.

Essentially, the BigQuery Export feature activates a mirror processing job during which this same data is also put into a designated BigQuery project. Once daily, currently at approximately 7am Pacific Time, this export job will commence for the previous day’s data (and generally completes within 2-4 hours). This means that you should expect Monday’s data to appear in your BigQuery project on Tuesday before noon (if you live on the US west coast).

Once in BigQuery, you can run SQL-like queries against multi-terabytes datasets in a matter of seconds, combine Google Analytics tables with tables from your data warehouse, and crunch hit- and session-level data in unprecedented detail.

What Does It Look Like?

Once a Google Analytics View is enabled for the export, a new dataset will be automatically created in your BigQuery project. The name of the dataset will be the View ID (the same as visible in the GA user interface under “View Settings”). A new table will be created for each exported day.

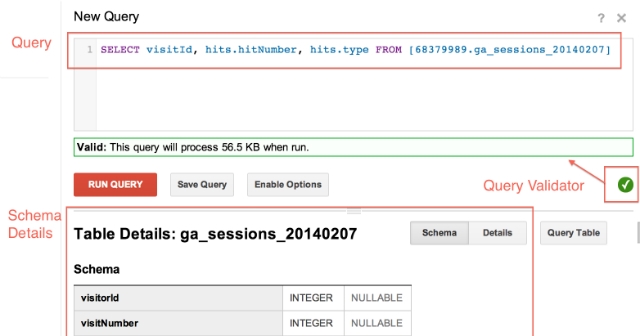

The schema, the structure in terms of fields available to include in your queries, are easily accessible directly in the BigQuery interface by clicking on a table. The schema is also documented in the Google Analytics help center.

The screenshot below shows the query field. The language is pretty intuitive even if you are unfamiliar with SQL: the current query means that we have selected to show visit ID, hit type, and hit number from all sessions in one particular table (representing one day’s data).

Tip #1: use the Validator to ensure that your query is valid before running it. This will save you a lot of time.

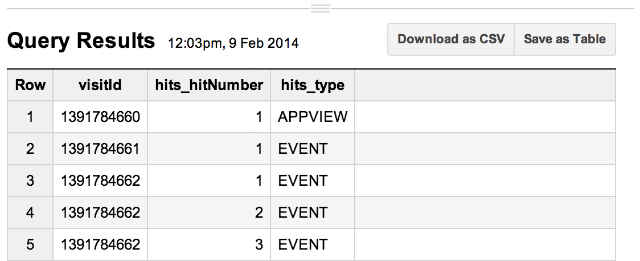

BigQuery returns the results below the query field. In the next screenshot, you can see the type of each hit, in which order that hit happened in the recorded session, and the ID for that session. Visible are three sessions; rows 3-5 are all within the same session since these hits share the same visit ID (remember, a visit is the same thing as a session, the words are synonymous).

Tip #2: Save your queries (using the “Save Query” button) to avoid the hassle of rebuilding them each time. Saved queries are available to you in the “Query History” section in the BigQuery UI

Food For Thought

Finally, I want to leave you with some useful frequently asked questions regarding this feature.

1. BigQuery Export is for Google Analytics Premium only?

The BigQuery Export feature is only available for Google Analytics Premium accounts (these are the only accounts that have access to unsampled data in the first place).

2. What are the row limits?

Good news: there are no row limits! Every single hit will be exported to these tables. Along with some extra fields, like visit ID’s and timestamps, this feature consequently gives the most granular access to unsampled Google Analytics data available.

3. How much does it cost?

Note that there are costs associated with BigQuery usage and storage (details here). To give you some perspective, a billion Google Analytics hits equals about a terabyte of data. A terabyte of data costs 80 dollars to store per month in BigQuery. For Google Analytics Premium pricing, contact Google.

4. Do View Filters apply when using BigQuery export?

Note that since the export job mirrors that of the processing job for the tables you normally access through the GA user interface, the same filter configurations will also apply to the data being exported to BigQuery.

5. Can I export data from multiple Views per property?

No. Only one View per property may be exported. You should consequently pick the View that gives you the most value in terms of data. Unless you are concerned about the size of the export, arguably the best View to export is therefore one of your master Views or a raw, completely unfiltered View.

6. BigQuery Export is a Firehose

It’s important to understand that once you enable this feature, the export job will run until disabled. This means that it will continuously fill your project with data, and there is no automatic deletion of old data. It is therefore a good idea to work out an internal process for how much data you want to store in BigQuery and regularly remove data that you no longer need to avoid unnecessary costs.

7. Classic vs. Universal Analytics

The feature can be enabled for both Classic and Universal Analytics Views (including App Views). However, fields which are only relevant for one of the platforms will not populate for the other. Custom Variables is one such example. These were replaced by Custom Dimension in Universal Analytics. Hence, if you export a Universal Analytics View, the Custom Variable field will be empty.

8. Should I use the Google Analytics API or BigQuery Export?

Without saying that one solution is necessarily better than the other (it depends on your own system and preferences), the BigQuery Export has several benefits. First of all, when using the API you are limited in terms of how many dimensions you can include in your query as you construct your reports. With the data in BigQuery there is no such limit, you can include whatever fields you want when you build a query (in fact, using * returns every single dimension and metric, although this would be an expensive query). Secondly, there are some additional fields that you cannot access through the API, such as the visitId or visitorId, which means the BigQuery Export data is even more granular. Lastly, even if you can match the automatic delivery of all sessions data into your own system, BigQuery is extremely powerful when it comes to rapidly processing multi-terabyte datasets that is hard to match for any BI system.

Closing Thoughts

BigQuery Export is fantastic news for anyone looking to crunch their unsampled Google Analytics data. From basic queries to advanced interactive analysis, the feature is extremely useful for anyone who wants to tap into granular, hit-level data. Although this is an Analytics Premium only feature, you may access a sample dataset by following the instructions here to try it out.

We are eager to hear your thoughts about BigQuery Export. If you got any particular use case you want to share, or just comment on the feature, please do so below!