[Last Updated on November 2013]

In this article I discuss Content Experiments, a tool for creating A/B tests from inside Google Analytics. It has several advantages over the old Google Website Optimizer, especially if you are just starting your website testing journey. Content Experiments provides a quick way to test your main pages (landing pages, homepage, category pages) and requires very little code.

Here is a quick overview of the most prominent features that will help marketers get up and running with testing:

- Only the original page needs the experiment script; the standard Google Analytics tracking code is used to measure goals and variations.

- Website goals defined in Google Analytics can be used as the experiment goal, including AdSense revenue.

- The Google Analytics segment builder can be used to segment results based on any segmentation criteria.

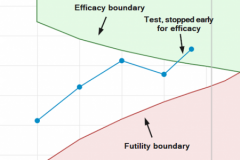

- Multi-armed Bandit approach: yields results faster than classical testing, at less cost, and with just as much statistical validity.

- Tests will automatically expire after 3 months to prevent leaving tests running if they are unlikely to have a statistically significant winner.
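To give a feel for why a multi-armed bandit can reach an answer with less wasted traffic, here is a minimal Python sketch of one common bandit strategy, Thompson sampling with Beta posteriors. It is an illustration only: the function names, the conversion rates and the visitor count are all made up for the example, and it is not meant to reproduce the exact algorithm Content Experiments runs.

```python
import random


def choose_variation(stats, rng):
    """Sample a plausible conversion rate for each variation and pick the best.

    stats maps a variation name to [conversions, visits].
    """
    best_name, best_draw = None, -1.0
    for name, (conversions, visits) in stats.items():
        # Beta(1 + successes, 1 + failures) is the posterior under a uniform prior.
        draw = rng.betavariate(1 + conversions, 1 + visits - conversions)
        if draw > best_draw:
            best_name, best_draw = name, draw
    return best_name


def simulate(true_rates, visitors, seed=0):
    """Send simulated visitors one by one and record what each variation gets.

    true_rates holds hypothetical conversion rates, unknown to the bandit.
    """
    rng = random.Random(seed)
    stats = {name: [0, 0] for name in true_rates}
    for _ in range(visitors):
        name = choose_variation(stats, rng)
        stats[name][1] += 1                  # one more visit
        if rng.random() < true_rates[name]:
            stats[name][0] += 1              # the visit converted
    return stats


if __name__ == "__main__":
    result = simulate({"original": 0.05, "variation": 0.20}, visitors=5000)
    for name, (conversions, visits) in result.items():
        print(f"{name}: {visits} visits, {conversions} conversions")
```

In a run like this, most visitors end up on the stronger variation well before the simulation finishes, whereas an even split would keep sending half the traffic to the weaker page.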

Below is a step-by-step guide on how to use Content Experiments to create A/B tests.

Creating Content Experiments

To create a new experiment, navigate to the Behavior section and click the Experiments link in the sidebar. You will see a page listing all your existing experiments. Above this table is a Create experiment button. Click it and you will be asked for the following information:

- Name for this experiment.

- Objective for this experiment: you can either choose an existing goal (including Ecommerce and AdSense metrics) or you can create a new goal.

- Percentage of traffic to experiment: the higher the percentage, the quicker you will get significant results.

- Email notification for important changes: highly recommended!

- Distribute traffic evenly across all variations: if you turn this on, you will not get the benefits of the Multi-armed Bandit approach mentioned above.

- Set a minimum time the experiment will run: defines a minimum period during which Google Analytics will not declare a winner. This is highly recommended if your website has significant seasonality or different behavior patterns on weekends and weekdays, for example; otherwise you might end up with a page optimized for only one of these segments.

- Set a confidence threshold: The higher the threshold, the more confident you can be in the result, but it also means the experiments will take longer to finish.

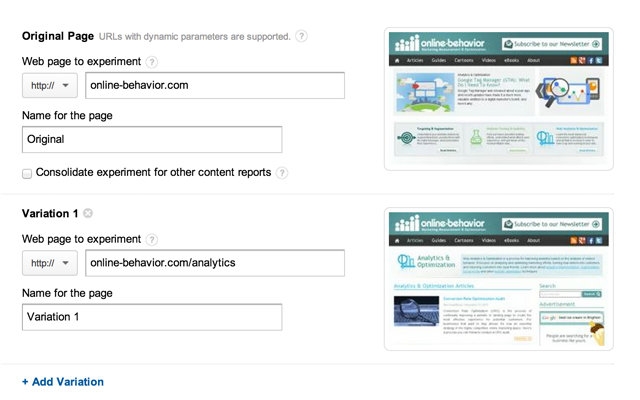
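To make the confidence threshold setting more concrete, the sketch below estimates the probability that a variation beats the original and compares it with a threshold. This is a generic Bayesian approximation written for this article; the function names and the sample numbers are assumptions, and Google Analytics may compute its own figure differently.

```python
import random


def probability_to_beat_original(original, variation, draws=20000, seed=0):
    """Estimate P(variation rate > original rate) by Monte Carlo sampling.

    Each argument is a (conversions, visits) tuple.
    """
    rng = random.Random(seed)
    wins = 0
    for _ in range(draws):
        # Draw one plausible conversion rate per page from its Beta posterior.
        p_orig = rng.betavariate(1 + original[0], 1 + original[1] - original[0])
        p_var = rng.betavariate(1 + variation[0], 1 + variation[1] - variation[0])
        if p_var > p_orig:
            wins += 1
    return wins / draws


def has_winner(original, variation, threshold=0.95):
    """True when the variation clears the chosen confidence threshold."""
    return probability_to_beat_original(original, variation) >= threshold


if __name__ == "__main__":
    # Hypothetical data: 50 of 1000 visits converted vs. 90 of 1000.
    print(has_winner((50, 1000), (90, 1000), threshold=0.95))
```

Raising the threshold means the variation needs stronger evidence before it counts as a winner, which is why a higher setting usually makes the experiment run longer.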

Once you have entered all the information above, click Next and you will reach the following page.

On this page you can add the URLs of your original page and the variations you would like to test. You will see thumbnails of each page, which helps you make sure the URLs are correct.

Click Next.

Setting Up The Content Experiment Code

In this section you simply choose how the code will be implemented: either you add the necessary code yourself (in which case you should copy it), or you send it by email to whoever is implementing it.

Click Next.

Validating And Confirming The Content Experiment Code

As mentioned above, you only need to implement one code snippet to use this tool. In this step your pages will be verified. If the code is not found, you will see an error message.

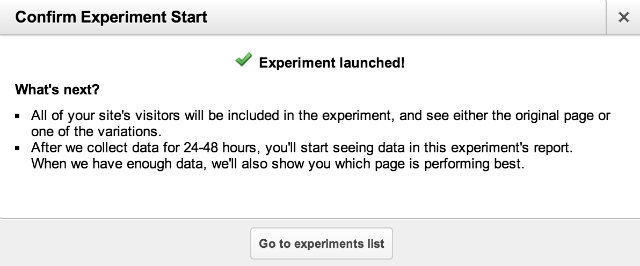

Note that you can skip validation if you want: just click Start Experiment. If you do so, you will see a popup with the following message: “Experiment validation had errors or did not complete. Are you sure you want to start the experiment? If you are sure that your experiment is properly set up, you may continue.” Still, it is recommended that you check the code to understand why you are getting an error and then try validating again.
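If validation fails, a quick local check of your page source can help narrow down the problem. The Python sketch below follows the rule described earlier: the original page needs both the experiment code and the standard tracking code, while variations only need the tracking code. The marker strings are placeholders I made up; replace them with a distinctive piece of the snippets you were actually given.

```python
def check_pages(original_html, variation_htmls, experiment_marker, tracking_marker):
    """Return a list of human-readable problems found in the page sources.

    original_html: HTML source of the original page.
    variation_htmls: dict mapping a variation name to its HTML source.
    experiment_marker / tracking_marker: text expected inside each snippet
    (placeholders here, not the real snippet contents).
    """
    problems = []
    if experiment_marker not in original_html:
        problems.append("original: experiment code not found")
    if tracking_marker not in original_html:
        problems.append("original: tracking code not found")
    for name, html in variation_htmls.items():
        if tracking_marker not in html:
            problems.append(f"{name}: tracking code not found")
    return problems


if __name__ == "__main__":
    # Placeholder markers and toy pages, for illustration only.
    EXPERIMENT = "EXPERIMENT-SNIPPET"
    TRACKING = "TRACKING-SNIPPET"
    original = f"<html><head>{EXPERIMENT}{TRACKING}</head><body></body></html>"
    variations = {"variation 1": "<html><head></head><body></body></html>"}
    for problem in check_pages(original, variations, EXPERIMENT, TRACKING):
        print(problem)
```

This only checks that the text is present in the source; it does not tell you whether the code is placed correctly or executes, so it is a first sanity check and not a replacement for the built-in validation.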

Yay! You did it!

Content Experiment Results

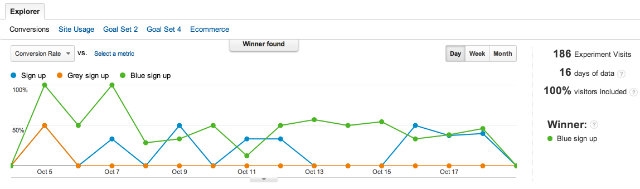

Once the Experiment is live, you will have the following options:

- Conversion Rate: gives you the option to check the test results using alternative metrics.

- Stop Experiment

- Re-validate

- Disable Variation

- Segmentation: as mentioned above, this is an extremely valuable feature. It helps you better understand how each variation performs for each segment of visitors on your website.

And below we see the results page of a test with a winning version, the Blue Sign up (green line, yeah, that’s confusing :-), with a lift of 52% in conversions as compared to the original.
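If you want to double-check a lift figure like this one, relative lift is simply the difference between the two conversion rates divided by the original's rate. Here is a small Python sketch; the visit and conversion counts are invented so that the arithmetic lands on 52%, they are not the numbers behind the test above.

```python
def conversion_rate(conversions, visits):
    """Conversions divided by visits."""
    if visits <= 0:
        raise ValueError("visits must be positive")
    return conversions / visits


def relative_lift(original_rate, variation_rate):
    """Relative improvement of the variation over the original."""
    if original_rate <= 0:
        raise ValueError("original_rate must be positive")
    return (variation_rate - original_rate) / original_rate


if __name__ == "__main__":
    # Hypothetical counts: 25 of 1000 visits convert on the original,
    # 38 of 1000 on the variation.
    original = conversion_rate(25, 1000)    # 2.5%
    variation = conversion_rate(38, 1000)   # 3.8%
    print(f"{relative_lift(original, variation):.0%}")  # prints 52%
```

Note that a 52% lift here means going from 2.5% to 3.8%, a gain of 1.3 percentage points, so it is worth looking at the absolute rates as well as the relative number.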

Reviewing All Experiments

Any time you want to review your experiments just visit http://onbe.co/IXznAO

What are you waiting for? Start testing!